Powering up data engineers: our investment in Databand

At Accel, we’ve been actively investing in the data and analytics market for more than a decade and have been fortunate to partner with companies like Cloudera, Qlik, Segment and many others from the very early innings. Beyond that though, we’re active consumers of this technology internally, as we rely heavily on data to inform our day-to-day decisions. We’ve invested in teams and tools to help us build and maintain our data infrastructure and ultimately accelerate time to value from the raw data that we’re collecting. However, even at our relatively small scale, we run into issues - every week - creating consistent, high quality datasets that are ready for consumption.

The cause is not a surprise - data is messy. As we’ve pushed to make data broadly accessible, it has led to a proliferation of use cases, many different permutations of dashboards, and a ton of data heterogeneity. If something upstream changes, such as a schema in an origin database, a data pipeline can break and discerning provenance and veracity of anything is difficult. At best, we lose time and, at worst, we make decisions based on incorrect information – resulting in a lack of trust in the datasets we’re producing.

From the conversations we’ve had with data teams across our portfolio and some of the most sophisticated data-driven enterprises in the world, this pain point is broadly felt and exacerbated by scale. It shouldn’t come as a surprise that 86% of enterprises plan to increase their DataOps investment in the next twelve months and the data engineer is the fastest growing job in tech right now. Data engineers are charged with ensuring that data can be trusted - that it’s delivered consistently, on-time, and of the expected quality. Yet, for such a critical role, they seem woefully ill-equipped to do their jobs efficiently. In our view, the biggest gap is a fundamental lack of visibility into the quality of the datasets being created and the transformation pipeline processes that create them. We see parallels to DevOps tooling for application and infrastructure monitoring, like our portfolio companies Humio, Instana, and Sumo Logic, and have come to realize that the data ecosystem will require analogous solutions and companies to be built.

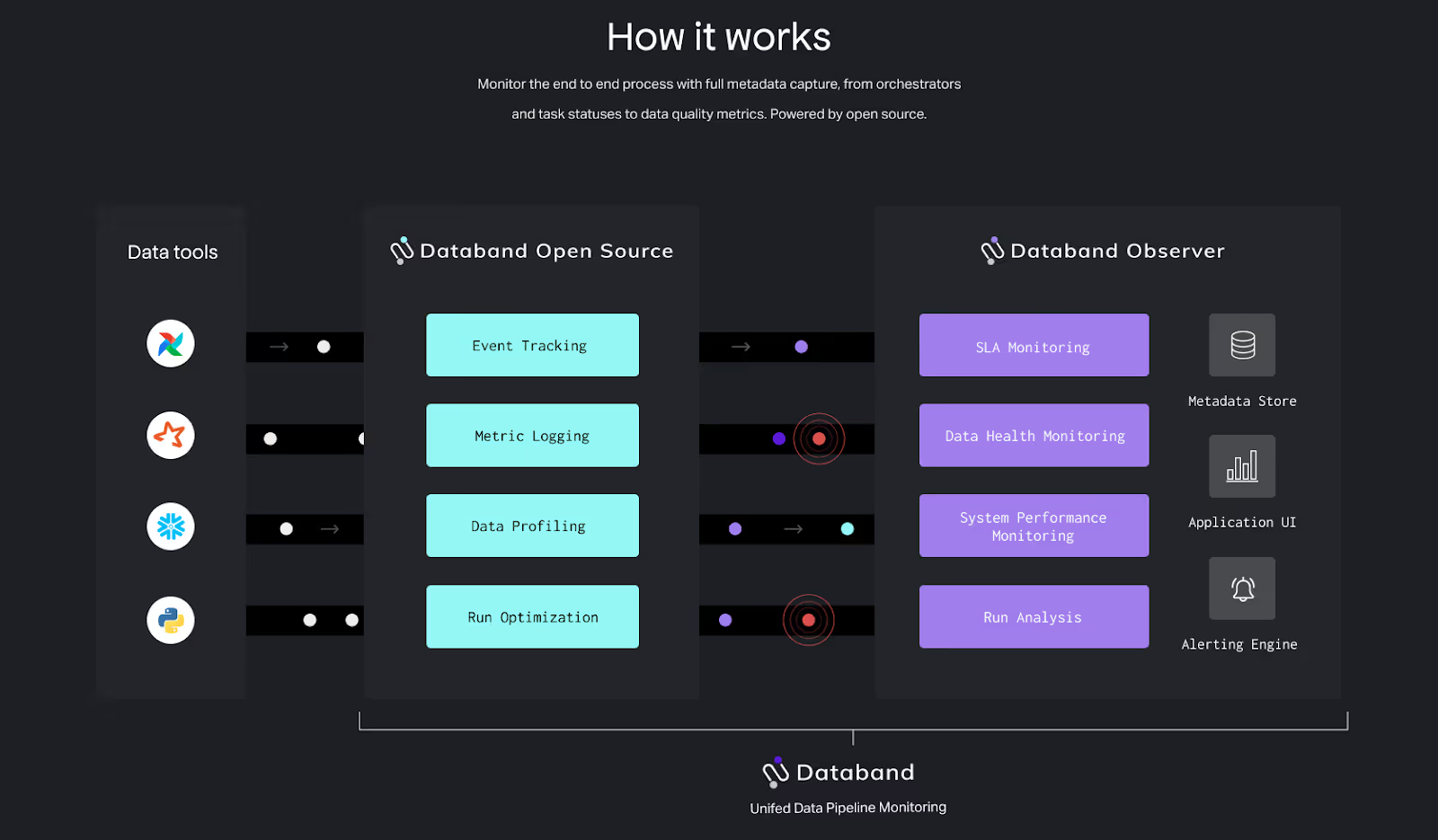

Data engineers have historically been reliant on piecemeal information available from the different constituent tools and processes, which can impose a large drain on resources in order to resolve data issues. We’re now seeing a few young companies entering this problem space. In our mind, the core tenets of a winning solution include both breadth (end-to-end coverage of the process) and depth (tracking each job down to the code level). For example, querying your database for a snapshot of a dataset is important, as it can surface issues and prevent data consumers from inadvertently relying on incomplete or erroneous information; however, without the ability to see how the dataset was constructed end-to-end across the entire data pipeline, it’s very difficult to diagnose the root cause and ultimately debug the problem.

Into this space steps Databand, an Israel/NYC based company building a suite of tools to power up data engineers. Their first product helps enterprise data engineering and operations teams monitor the health of their datasets and pipelines for quality, performance, and governance purposes.

As a founding team of data engineers and product managers, Josh, Evgeny, and Victor built Databand to resolve the pain points they were experiencing first-hand. Their product integrates across pipeline architectures and underlying tools, including Airflow, Spark and Snowflake, and is already being used to monitor production pipelines of some of the largest, most sophisticated data teams in the world. As companies in all industries seek to become more data-driven, Databand delivers an important element of the stack - ensuring the reliable delivery of high quality data for analytics and machine learning use cases. We’re excited to lead their Series A and partner with them on this journey.

Great companies aren't built alone.

Subscribe for tools, learnings, and updates from the Accel community.